Bad Facial Recognition Leads to False Arrest

NFK Editors - July 1, 2020

In 2019, Robert Julian-Borchak Williams was wrongly arrested for stealing five watches from a store. Though he didn’t do it, he was arrested after his face was “recognized” by a computer system. Now he’s making a complaint against the Detroit police.

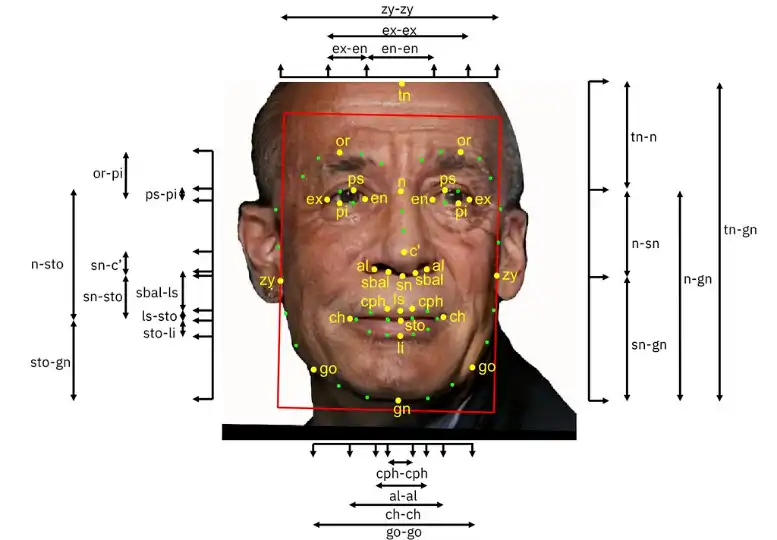

When a computer system identifies a person from their face, it’s called “facial recognition”. Facial recognition programs take measurements of important parts of the face and turn that information into a math formula describing the face. The programs then search for faces with similar formulas.
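The idea of comparing "formulas" can be shown with a small sketch. It assumes each face has already been turned into a short list of numbers; the names, the numbers, and the use of straight-line (Euclidean) distance are all made up for illustration, and real programs are far more complicated.

```python
import math

def face_distance(a, b):
    """Straight-line (Euclidean) distance between two lists of face measurements."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def closest_match(probe, gallery):
    """Return (name, distance) for the stored face most similar to the probe."""
    return min(
        ((name, face_distance(probe, numbers)) for name, numbers in gallery.items()),
        key=lambda pair: pair[1],
    )

# Hypothetical measurements; a real system would use many more numbers per face.
gallery = {
    "person_a": [0.42, 0.31, 0.77],
    "person_b": [0.40, 0.35, 0.70],
    "person_c": [0.90, 0.10, 0.20],
}
probe = [0.41, 0.34, 0.72]  # numbers taken from the new photo

name, distance = closest_match(probe, gallery)
print(name, round(distance, 3))
```

Notice that this sketch always returns the closest stored face, even if the person in the new photo isn't in the collection at all. A "closest match" is only a guess, not proof.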

But facial recognition programs have a mixed record. The programs often recognize white men correctly, but they’re not as good at identifying women and people with darker skin.

Still, many police departments use facial recognition. They believe it helps them do their jobs. In some cases, facial recognition has helped identify criminals.

But it’s not unusual for a system to create a “false match” – when the computer identifies a person, but it’s wrong. For years, experts have warned that false matches could make innocent people look like criminals.

When a facial recognition program is wrong, it’s called a “false match”. False matches are more common with women and people with darker skin.

Mr. Williams’s arrest is the first known case of someone being wrongly arrested because of bad facial recognition.

A facial recognition program chose Mr. Williams as the closest match of an image taken from a security video. The person who sent the video to the police also chose Mr. Williams’s photo from a group of photos. But that person hadn’t been at the store during the robbery.

Mr. Williams was arrested, kept in jail overnight, and questioned. Mr. Williams said that when he was questioned, he held the picture from the security video near his face. He reports that one of the police officers then said, “the computer must have gotten it wrong.”

In spite of the mistake, Mr. Williams wasn’t released until hours later. Mr. Williams is now working with the ACLU (American Civil Liberties Union) to make a complaint against the Detroit police.

The ACLU is concerned because the police counted on facial recognition instead of police work. Before he was arrested, Mr. Williams wasn’t asked about where he was when the robbery happened or whether he owned clothes like those worn by the man shown in the security video.

Mr. Williams says that he wouldn’t have known why he was arrested if the police hadn’t mentioned the facial recognition “match”. He thinks there could be other innocent people who have also been wrongly arrested.

Some local governments are taking facial recognition concerns seriously. San Francisco, Boston, and several other cities in Massachusetts have banned the use of facial recognition.

Even some of the companies behind facial recognition are taking a step back. IBM has dropped its facial recognition program. Microsoft and Amazon are limiting how they sell the technology to police departments.

But most of the facial recognition tools used by police departments are made by less well-known companies which are still promoting the technology.

IBM, Microsoft, and Amazon have asked the US Congress to make laws that limit the use of facial recognition. Such laws may be the only way to keep innocent people from going to jail because of bad technology.

Check Yourself

1. Facial recognition programs often recognize white men, but aren't so good at identifying women and people with darker skin.

True / False

2. When the facial recognition program identifies a person, but it’s wrong, it's called a _______________.

3. The ACLU is concerned because the Detroit police counted on facial recognition instead of _______________.

4. Which tech company has dropped its facial recognition program?

Do you think police should be allowed to use facial recognition? Why or why not? Can you think of any rules that might help protect innocent people?
